Kling 3.0 vs HappyHorse 1.0: A Production-First Comparison (Quality, Control, Audio, API)

Kling 3.0 vs HappyHorse 1.0: how to choose fast (without trusting hype)

If you’re searching Kling 3.0 vs HappyHorse 1.0, you probably want a simple answer: which model is “better”.

In production, “better” depends on what you’re shipping:

- a short-form ad loop (TikTok / Reels / Shorts)

- a product-demo clip you’ll iterate weekly

- a creator workflow where audio and pacing matter

- an API-driven pipeline where reliability and knobs are the real product

This guide is built for teams who want to decide fast. You’ll get:

- a reality-checked summary of what public sources claim

- a decision matrix you can use in 60 seconds

- a minimum viable evaluation (MVE) you can run fast

- a failure-mode playbook (symptom → fastest fix)

Quick glossary (so “Kling 3.0 vs HappyHorse 1.0” threads make sense)

If you read Kling 3.0 vs HappyHorse 1.0 discussions, people often mix model names, endpoints, and evaluation terms. Here’s a fast glossary you can reuse in any AI video model comparison:

- Kling AI 3.0: the Kling 3.0 family (Feb 2026 release).

- HappyHorse-1.0: the model name used by providers.

- text-to-video model: prompt-only generation.

- image-to-video model: animate a still image.

- reference-to-video: use references to reduce identity drift.

- Elo ranking: blind preference signal (helpful, not sufficient).

- duration limit: seconds-per-clip cap that forces stitching.

- prompt drift / identity drift: the failures you must score.

Start with the only question that matters: what are you shipping?

The fastest way to resolve the Kling 3.0 vs HappyHorse 1.0 debate is to define acceptance gates before you generate anything.

Here are the five gates that matter in real pipelines.

Acceptance gate #1: consistency (does it stay the same across time?)

In most Kling 3.0 or HappyHorse 1.0 comparisons, “quality” usually means “looks sharp”. But most teams fail on temporal problems:

- identity drift (face/hair/clothes changing)

- lighting drift (scene mood shifts mid-clip)

- motion artifacts (warping in hands, teeth, text)

Acceptance gate #2: control (can you direct the shot?)

Control is not a bonus feature. It’s what turns a text-to-video model into a production tool:

- camera movement discipline (no random zooms)

- shot size discipline (wide/medium/close stays stable)

- subject intent (the model doesn’t “improvise” away from your story)

Acceptance gate #3: duration (do you need more than a micro-clip?)

Public sources, including Kuaishou’s Kling AI 3.0 announcement, cite “up to ~15s” as an upper bound for a single generation. That cap matters operationally: it tells you whether you can ship a full beat in one clip or must plan a stitching workflow.
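As a rough planning aid, the arithmetic behind that stitching decision can be sketched as follows. The function name and the 15-second default are illustrative assumptions; use whatever cap your provider actually exposes.

```python
import math


def clips_needed(target_seconds: float, cap_seconds: float = 15.0) -> int:
    """Return how many clips must be stitched to cover target_seconds.

    cap_seconds is the per-generation duration limit. The 15s default is
    an assumption taken from the publicly cited upper bound; your
    provider's real limit may be lower.
    """
    if target_seconds <= 0:
        raise ValueError("target_seconds must be positive")
    if cap_seconds <= 0:
        raise ValueError("cap_seconds must be positive")
    return math.ceil(target_seconds / cap_seconds)


if __name__ == "__main__":
    for target in (6, 15, 30, 40):
        print(f"{target}s beat -> {clips_needed(target)} clip(s)")
```

Anything that returns more than 1 means you should budget for a stitching step and for continuity checks at each seam.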

Acceptance gate #4: audio expectation (silent-first or audio-in-the-loop?)

Audio is a workflow decision:

- silent-first: generate video, then add audio in post (fast to iterate visuals; slower to ship final)

- audio-in-the-loop: generate video + audio together (fewer post steps; higher expectations for sync)

Kling AI publicly positions Kling 3.0 as having native audio generation tied to its video workflow (more on that below).

Acceptance gate #5: integration & economics (API, endpoints, cost predictability)

Even the best AI video model comparison is incomplete without the boring parts:

- do you have API access or only a consumer UI?

- do you get endpoints for T2V, I2V, reference-to-video, and editing?

- can you estimate cost-per-minute and iteration count?

- can you standardize settings across providers?

What public sources actually claim (and what they don’t)

To keep this Kling 3.0 vs HappyHorse 1.0 guide grounded, here’s what the public sources say—separated into “announced capability” vs “what you still must test yourself”.

Kling AI 3.0 claims at-a-glance

Kuaishou’s Kling AI 3.0 announcement (Feb 5, 2026) positions Kling AI 3.0 as a set of models (Video 3.0 / Video 3.0 Omni / Image 3.0 / Image 3.0 Omni) and highlights:

- improved consistency and realism

- up to ~15 seconds per generation

- native audio generation (video + audio together)

- a unified multimodal architecture

- multi-shot storyboarding (notably for the “Omni” model)

Source: Kuaishou IR press release (Kling AI 3.0 launch): https://ir.kuaishou.com/news-releases/news-release-details/kling-ai-launches-30-model-ushering-era-where-everyone-can-be

What this doesn’t guarantee:

- that your specific character remains consistent across your prompts

- that audio sync meets your brand threshold (lip-sync, timing, style)

It does suggest Kling AI 3.0 is positioned around narrative control and audiovisual completeness.

HappyHorse 1.0 claims at-a-glance

HappyHorse 1.0 (often written as HappyHorse-1.0) is described publicly as a top-ranked AI video model on Artificial Analysis, with distribution via API partners.

Two useful public anchors:

- A WSJ report (Apr 10, 2026) frames HappyHorse 1.0 as a new Alibaba model topping a global ranking shortly after debut. Source: https://www.wsj.com/tech/ai/alibabas-new-ai-video-generation-model-tops-global-ranking-after-debut-801fe3f7

- A PRNewswire release (Apr 27, 2026) announces fal as an official API partner, with endpoints for text-to-video, image-to-video, reference-to-video, and video editing. Source: https://www.prnewswire.com/news-releases/fal-launches-happyhorse-1-0--the-1-ranked-ai-video-model-as-official-api-partner-302755003.html

What this doesn’t guarantee:

- that the #1 ranking maps to your target scenes (product demos, humans, faces, motion)

- that your provider’s parameters match the configuration used in any public demo

It does suggest HappyHorse-1.0 is designed for API-first usage with multiple endpoints.

Leaderboard reality check: Elo is useful, but only for one slice of truth

Leaderboards like Artificial Analysis Video Arena are useful for one thing: blind preference at scale. If lots of people choose one output over another, that’s a signal about perceived quality.

But it’s not a substitute for a production evaluation of Kling 3.0 vs HappyHorse 1.0.

Here are two ways teams misread rankings.

Misread #1: “#1 means it’s best for my workflow”

A model can win blind preference comparisons and still be painful operationally:

- inconsistent character identity across iterations

- weak shot control (random camera behavior)

- fragile performance in “boring” commercial scenes (product, studio lighting, clean materials)

Misread #2: “The leaderboard prompt is my prompt”

Leaderboard prompts are often optimized for visual “wow”. Production prompts are optimized for:

- brand-safe content

- consistent subject behavior

- repeatable camera motion

- predictable output format

If you use Artificial Analysis as a signal, treat it as one input—not the answer.
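To put a number on what a rating gap means, here is a minimal sketch that assumes the classic Elo expected-score formula with a 400-point scale. The leaderboard's actual rating method may differ, so treat the output as an intuition aid rather than a reading of any published score.

```python
def expected_preference(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Probability that output A is preferred over output B.

    Uses the classic Elo expected-score formula. The 400-point scale is
    the conventional default and is an assumption here, not a documented
    property of any specific video leaderboard.
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


if __name__ == "__main__":
    # Hypothetical ratings, chosen only to illustrate the gap sizes.
    for gap in (0, 25, 50, 100):
        p = expected_preference(1200 + gap, 1200)
        print(f"rating gap {gap:>3}: A preferred in {p:.0%} of blind comparisons")
```

Under this assumption, a 100-point gap corresponds to roughly a 64% preference rate, so the lower-rated model still wins about one blind comparison in three. That is why a ranking alone cannot settle a production decision.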

Decision matrix (pick a default in 60 seconds for Kling 3.0 vs HappyHorse 1.0)

Here’s a pragmatic Kling 3.0 vs HappyHorse 1.0 decision matrix. The question is not “who wins overall” but “what should we default to for our next sprint?”

| Your priority | Pick Kling AI 3.0 when… | Pick HappyHorse-1.0 when… |
|---|---|---|
| Consistency in narrative clips | You care about coherent storytelling beats and multi-shot sequencing | You primarily care about single-clip best-case output and will validate consistency with your own harness |
| Shot control & storyboards | You want a model positioned around multi-shot storyboarding and direction | You prefer endpoint-driven generation and will build control via references + prompt specs |
| Audio in the loop | You want native audio generation as part of the generation workflow | You’re OK with silent-first or you will attach audio later in your pipeline |
| API integration | Your workflow is still UI-first and you want a “model product” that’s evolving quickly | You want a clean API story with multiple endpoints (T2V/I2V/reference/edit) from a provider partner |
| Procurement & budgeting | You can tolerate fuzzier pricing signals and will budget by iteration limits | You want clearer provider pricing signals and can estimate cost-per-minute per provider |
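If you want to apply the matrix the same way across a team, one option is to encode each cell as a need and tally the matches. The sketch below does that; the need labels are hypothetical shorthand for the matrix cells, and a simple vote count is an assumption about how to combine rows, not a rule the matrix itself defines.

```python
from collections import Counter

KLING = "Kling AI 3.0"
HAPPYHORSE = "HappyHorse-1.0"

# One entry per matrix cell. The keys are hypothetical shorthand labels.
MATRIX = {
    "multi_shot_storytelling": KLING,
    "single_clip_best_case": HAPPYHORSE,
    "storyboard_direction": KLING,
    "endpoint_driven_control": HAPPYHORSE,
    "native_audio": KLING,
    "silent_first": HAPPYHORSE,
    "ui_first_workflow": KLING,
    "multi_endpoint_api": HAPPYHORSE,
    "fuzzy_pricing_ok": KLING,
    "clear_provider_pricing": HAPPYHORSE,
}


def pick_default(needs: list[str]) -> str:
    """Return the model matching most of the listed needs, or "tie".

    Raises KeyError if a need is not one of the MATRIX labels.
    """
    votes = Counter(MATRIX[need] for need in needs)
    kling, happyhorse = votes[KLING], votes[HAPPYHORSE]
    if kling == happyhorse:
        return "tie"
    return KLING if kling > happyhorse else HAPPYHORSE


if __name__ == "__main__":
    print(pick_default(["native_audio", "storyboard_direction", "multi_endpoint_api"]))
```

A "tie" result is a prompt to run your own evaluation on both models rather than argue the default.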

Minimum viable evaluation (MVE): a 30-minute test harness

If you only do one thing after reading this AI video model comparison, do this: run the same three tests on both models and score them with the same rubric. This makes your Kling 3.0 vs HappyHorse 1.0 conclusion defensible.

How to run the test (fast)

- Pick one aspect ratio you ship most (9:16 or 16:9).

- Use the same duration setting if available (don’t compare 4s vs 15s).

- Score quickly. Don’t “prompt your way into a win” on the first pass.

Test prompt set (3 scenes designed to reveal failures)

These are designed to expose drift, camera chaos, and audiovisual mismatch—exactly where teams feel pain in Kling 3.0 vs HappyHorse 1.0 and every other AI video model comparison.

Test 1: “Commercial studio product” (reveals realism + stability)

Goal: consistent materials, controlled lighting, no random camera.

Product demo in a clean bright studio. A single smartphone on a minimal pedestal.

Camera: slow controlled orbit, no sudden zoom, no shaky motion. Keep framing stable.

Lighting: softbox key light, gentle reflections, no flicker. Background: plain gradient.

No text overlays, no logos, no extra products.

Test 2: “Human performance + subtle motion” (reveals identity drift)

Goal: face/hair/clothing stability; natural motion without warping.

A person standing in a softly lit room, speaking a short line while gesturing naturally.

Camera: locked-off, medium shot, no zoom. Keep the face stable and realistic.

Avoid artifacts in hands, teeth, and eyes. No text, no brand marks.

Test 3: “Two-beat micro story” (reveals multi-shot control)

Goal: can the model maintain continuity across beats?

Two-beat scene: (1) close-up of a coffee cup being placed on a desk, (2) medium shot of a person sitting down and opening a laptop.

Keep the scene consistent: same room, same lighting, same person.

Camera moves should be smooth and intentional. No random cuts.

Scoring rubric (simple, useful)

Score each test from 1–5 on:

- Consistency: identity/lighting/props stable

- Control: camera does what you asked (no chaos)

- Artifacts: hands/face/text stability; no warping

- Audio fit (if you enable audio): does it help or hurt shipping speed?

Then add one production score:

- Iteration cost: how many tries until you got a “ship-ready” clip?

This is the fastest way to decide HappyHorse 1.0 vs Kling 3.0 for your actual workload.
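The rubric above can be kept in a small script so every teammate scores the same way. This is a minimal sketch: the class, field, and function names are illustrative, and the numbers in the usage example are invented placeholders, not measured results for either model.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass(frozen=True)
class ClipScore:
    """One scored test run. All field names are illustrative."""
    model: str
    test: str
    consistency: int
    control: int
    artifacts: int
    audio_fit: Optional[int] = None  # leave as None when audio is disabled
    tries_to_ship: int = 1           # iteration cost: tries until ship-ready

    def dimensions(self) -> list[int]:
        dims = [self.consistency, self.control, self.artifacts]
        if self.audio_fit is not None:
            dims.append(self.audio_fit)
        return dims

    def __post_init__(self) -> None:
        if any(not 1 <= d <= 5 for d in self.dimensions()):
            raise ValueError("rubric scores must be between 1 and 5")
        if self.tries_to_ship < 1:
            raise ValueError("tries_to_ship must be at least 1")

    def quality(self) -> float:
        """Unweighted mean of the rubric dimensions that were scored."""
        return float(mean(self.dimensions()))


def summarize(scores: list[ClipScore]) -> dict[str, dict[str, float]]:
    """Average quality and iteration cost per model."""
    by_model: dict[str, list[ClipScore]] = {}
    for score in scores:
        by_model.setdefault(score.model, []).append(score)
    return {
        model: {
            "avg_quality": round(float(mean(s.quality() for s in rows)), 2),
            "avg_tries": round(float(mean(s.tries_to_ship for s in rows)), 2),
        }
        for model, rows in by_model.items()
    }


if __name__ == "__main__":
    # Placeholder scores for two unnamed models; replace with your own runs.
    runs = [
        ClipScore("model_a", "studio_product", 4, 5, 4, tries_to_ship=2),
        ClipScore("model_a", "human_performance", 3, 4, 3, audio_fit=2, tries_to_ship=4),
        ClipScore("model_b", "studio_product", 5, 3, 4, tries_to_ship=3),
    ]
    for model, summary in summarize(runs).items():
        print(model, summary)
```

The unweighted mean is a design choice for speed. If one dimension is a hard gate for you (for example, identity consistency), reject clips below a floor on that dimension instead of averaging it away.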

The one-change-per-iteration rule

If you iterate, change only one thing at a time:

- camera language

- subject description

- lighting constraint

- motion constraint

- reference input

Otherwise you can’t tell what improved (and you’ll end up arguing preferences again).
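One way to enforce the rule mechanically is to store each iteration as a structured spec and diff it against the previous one. The sketch below assumes prompts are kept as dictionaries with one key per item in the list above; the key names and helper functions are hypothetical.

```python
# Hypothetical field names, one per item in the one-change list.
FIELDS = ("camera", "subject", "lighting", "motion", "reference")


def changed_fields(previous: dict, current: dict) -> list[str]:
    """Return the spec fields whose values differ between two iterations."""
    return [f for f in FIELDS if previous.get(f) != current.get(f)]


def assert_single_change(previous: dict, current: dict) -> str:
    """Return the one changed field, or raise if zero or several changed."""
    diff = changed_fields(previous, current)
    if len(diff) != 1:
        raise ValueError(f"expected exactly one changed field, got {diff}")
    return diff[0]


if __name__ == "__main__":
    v1 = {
        "camera": "slow controlled orbit",
        "subject": "single smartphone on a minimal pedestal",
        "lighting": "softbox key light",
        "motion": "no sudden zoom, no shaky motion",
        "reference": None,
    }
    v2 = {**v1, "camera": "locked-off"}
    print("changed:", assert_single_change(v1, v2))
```

Logging the returned field name next to each clip's score gives you a record of which change produced which improvement.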

Failure-mode playbook (symptom → fastest fix)

Here are the failures you’ll hit most when comparing a text-to-video model or image-to-video model in production—especially when you do a real Kling 3.0 vs HappyHorse 1.0 bake-off.

Symptom: identity drift (face/hair/outfit changes)

Fast fixes:

- reduce prompt entropy (fewer competing descriptors)

- use a reference image for the character (if your provider supports reference-to-video)

- move from “cinematic” adjectives to concrete constraints (shot size, lighting, wardrobe)

Symptom: camera chaos (random zoom, shake, unwanted cuts)

Fast fixes:

- specify a single camera behavior: “locked-off” or “slow controlled orbit”

- remove language that implies action-movie motion (handheld, fast, dramatic)

- restate “no sudden zoom, no shaky motion” as a hard constraint

Symptom: motion artifacts (hands/teeth/text warping)

Fast fixes:

- keep the action simple in early iterations

- avoid tiny high-frequency patterns (dense fabric, busy signage)

- if your provider supports editing, fix the near-miss instead of regenerating from scratch

Symptom: audio mismatch (timing feels off, lip-sync misses)

Fast fixes:

- treat audio as a gate: either enable it intentionally or keep the workflow silent-first

- shorten dialogue and reduce mouth complexity (don’t test with fast speech first)

- validate whether the model/provider supports lip-sync explicitly vs generic audio generation
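If you score clips on consistency, control, artifacts, and audio fit, a small lookup can point you at the right playbook section. The mapping from score dimension to symptom and the threshold of 3 are assumptions made for this sketch, not part of any provider's tooling.

```python
# Assumed mapping: rubric dimension -> (symptom, first fix to try).
PLAYBOOK = {
    "consistency": ("identity drift", "reduce prompt entropy"),
    "control": ("camera chaos", "specify a single camera behavior"),
    "artifacts": ("motion artifacts", "keep the action simple in early iterations"),
    "audio_fit": ("audio mismatch", "decide between audio-on and silent-first"),
}


def triage(scores: dict[str, int], threshold: int = 3) -> list[tuple[str, str]]:
    """Return (symptom, first fix) pairs for dimensions below threshold.

    Results are ordered worst score first, so the top entry is the
    failure to address in the next iteration.
    """
    failing = sorted((value, name) for name, value in scores.items() if value < threshold)
    return [PLAYBOOK[name] for _, name in failing]


if __name__ == "__main__":
    clip = {"consistency": 2, "control": 4, "artifacts": 1, "audio_fit": 5}
    for symptom, fix in triage(clip):
        print(f"{symptom}: start with '{fix}'")
```

Working only on the top entry keeps each iteration to a single change.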

Procurement checklist (teams + budgets)

Before you commit to either side of Kling 3.0 vs HappyHorse 1.0, ask:

- Are you evaluating the model, or a provider implementation of the model?

- Do you have the endpoints you need (T2V, I2V, reference, edit)?

- Can you standardize settings across teammates (so results are comparable)?

- Can you budget by cost-per-minute and expected iteration count?
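For the budgeting question, the arithmetic is simple enough to script. The sketch below assumes your provider bills per generated second, which may not match your contract, and every price shown is a placeholder rather than a quoted rate.

```python
def cost_per_shipped_clip(price_per_second: float, clip_seconds: float, expected_tries: float) -> float:
    """Spend needed to get one ship-ready clip, counting discarded tries."""
    if min(price_per_second, clip_seconds) < 0 or expected_tries < 1:
        raise ValueError("prices and durations must be >= 0, expected_tries >= 1")
    return price_per_second * clip_seconds * expected_tries


def cost_per_shipped_minute(price_per_second: float, expected_tries: float) -> float:
    """Spend per minute of final footage at a given iteration count."""
    return cost_per_shipped_clip(price_per_second, 60.0, expected_tries)


if __name__ == "__main__":
    # Placeholder inputs: substitute your provider's price and your measured tries.
    price = 0.10  # hypothetical currency units per generated second
    tries = 4     # e.g. the average tries-to-ship from your own test runs
    print(f"per 10s clip: {cost_per_shipped_clip(price, 10, tries):.2f}")
    print(f"per shipped minute: {cost_per_shipped_minute(price, tries):.2f}")
```

Iteration count is usually the dominant term: a model with a higher list price can still be cheaper per shipped minute if it needs fewer tries.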

The “provider fragmentation” trap

The same model name can show up across providers with different defaults, limits, and pricing. Keep one harness and re-run it whenever you switch.
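A lightweight guard against comparing mismatched runs is to record the harness settings with each result and compare a fingerprint of them. This is a sketch under assumptions: the setting names are hypothetical, and real providers will expose different parameters that you would add as fields.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class HarnessSettings:
    """Settings that must match for two runs to be comparable (illustrative)."""
    aspect_ratio: str        # e.g. "9:16" or "16:9"
    duration_seconds: int
    audio_enabled: bool
    prompt_set_version: str  # hypothetical label for your test prompt set

    def fingerprint(self) -> str:
        """Short stable hash of the settings, independent of field order."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


def comparable(a: HarnessSettings, b: HarnessSettings) -> bool:
    """Two runs are comparable only if their settings fingerprints match."""
    return a.fingerprint() == b.fingerprint()


if __name__ == "__main__":
    provider_one = HarnessSettings("9:16", 10, False, "v1")
    provider_two = HarnessSettings("9:16", 15, False, "v1")
    print(provider_one.fingerprint(), provider_two.fingerprint())
    print("comparable:", comparable(provider_one, provider_two))
```

Storing the fingerprint alongside each score makes it obvious when a provider switch silently changed a default.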

Recommended workflows (safe defaults)

To make Kling 3.0 vs HappyHorse 1.0 actionable, here are safe defaults you can use immediately. Treat them as templates for any Kling 3.0 comparison or HappyHorse 1.0 comparison.

Workflow A: short-form ad loop

Run the same 3-test harness for Kling 3.0 vs HappyHorse 1.0, then iterate one change at a time. If your bottleneck is control and audiovisual completeness, start with Kling AI 3.0. If your bottleneck is endpoint-driven automation, start with HappyHorse-1.0.

Workflow B: product-demo loop

Keep a stable prompt template and compare Kling AI 3.0 vs HappyHorse-1.0 on the exact same product scene each week. Treat it as an AI video model comparison you can repeat, not a one-off demo.

Search variants

If your team searches in different ways, these are common variants that all refer to the same topic:

- Kling 3.0 vs HappyHorse 1.0

- HappyHorse 1.0 vs Kling 3.0

- Kling AI 3.0

- HappyHorse-1.0

- AI video model comparison

- text-to-video model

- image-to-video model

If you want a one-sentence framing: Kling 3.0 vs HappyHorse 1.0 is an AI video model comparison between Kling AI 3.0 and HappyHorse-1.0 across text-to-video and image-to-video workflows.

Verdict: pick a default, then validate with your own clips

There isn’t a universal winner in Kling 3.0 vs HappyHorse 1.0.

The production-first approach is:

- pick a default based on your bottleneck (control, audio, API, or cost predictability)

- run the 30-minute harness

- lock a workflow template so your team stops re-litigating the decision

Audit receipt (EN)

Target: 1800 - 2500 words, density 3.0% - 3.4% (core + variants combined).

Run node scripts/blog-engine-validate.mjs kling-3-0-vs-happyhorse-1-0 to produce the final counts.

Kling 3.0 vs Runway Gen-4.5: The Ultimate AI Video Showdown (2026 Comparison)

A comprehensive 2026 comparison. We test Kling 3.0 vs Runway Gen-4.5 (Flagship) and Kling 2.6 vs Gen-4 (Standard). Discover which AI video generator offers the best daily free credits.

Kling 3 vs Kling 2.6: The Ultimate Comparison & User Guide (2026)

Kling 3 Video is here with Omni models and native lip-sync. How does it compare to Kling 2.6? We break down the differences, features, and which Klingai tool you should choose.

GPT Image 2 360 VR Background: A Deliverable Workflow for Seamless Equirectangular Panoramas

Make a VR-ready 360 background you can actually share: a deliverable-first workflow for GPT Image 2 360 panorama generation, seam fixes, 2:1 equirectangular constraints, and viewer QA.

Kling 3 4K vs Pro (1080p): When 4K Is Worth It-and When It's Not

A practical decision framework for choosing Kling 3 4K vs Pro (1080p): when 4K improves detail, motion, and compression-and when 1080p is the smarter default.

Kling 3 4K Workflow: Prompts, Shot Planning, and Export Settings That Actually Hold Up

A repeatable Kling 3 4K workflow to get usable deliverables: two-pass iteration, prompt templates, safe complexity rules, and export guidance to survive platform recompression.

Kling 3 Native 4K: What It Means for Quality, Motion, Compression, and Real-World Use

Learn what Kling 3 native 4K changes vs 1080p: sharper detail, cleaner motion, fewer artifacts, and when 4K is actually worth it.

HappyHorse AI Video Generator: What the New Model Can Do

Discover HappyHorse, a new AI video generation model with text-to-video, image-to-video, video-to-video, native audio, and creator-friendly workflows.

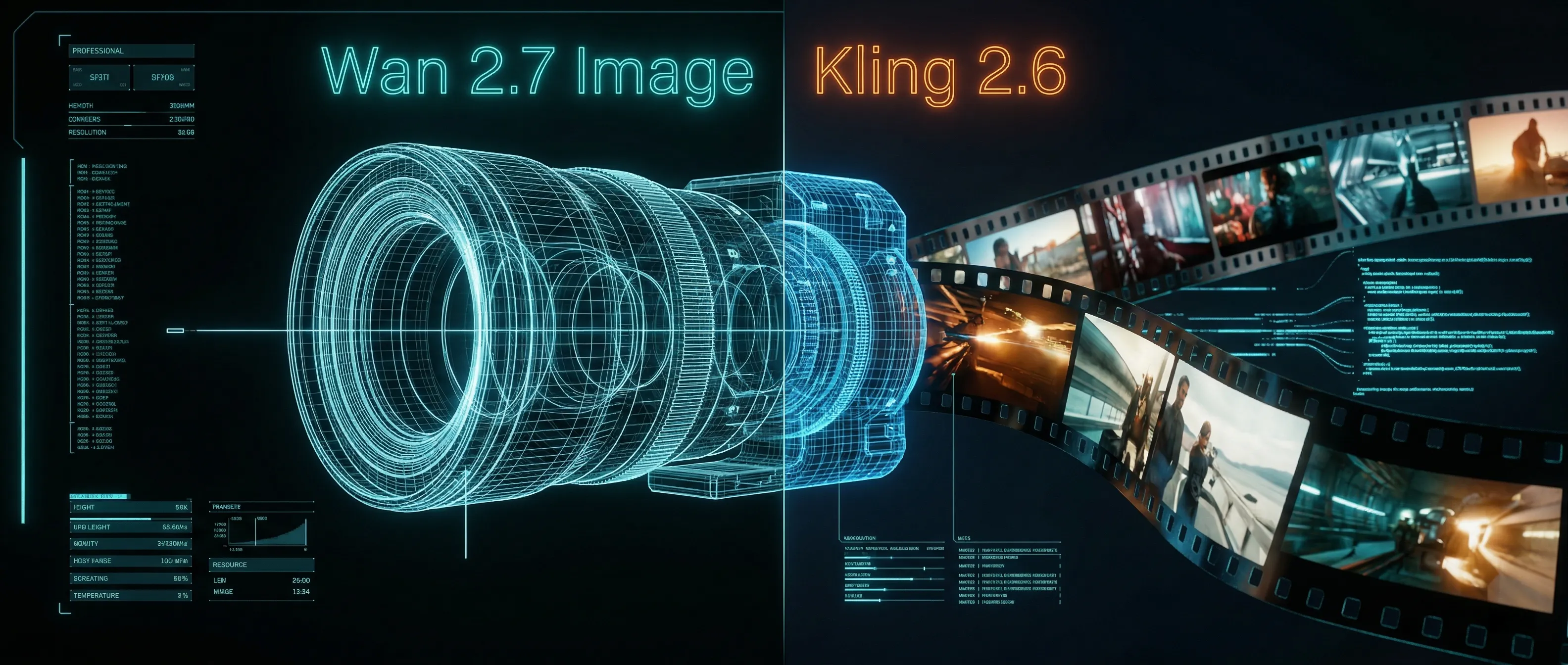

Wan 2.7 Image Meets Kling 2.6: The Ultimate AI Visual Workflow

Discover how the new Wan 2.7 Image model's advanced editing and 3K text rendering capabilities create the perfect asset pipeline for Kling 2.6 video generation.