The Next Generation of Generation: Unpacking the Wan 2.7 Upgrade

The landscape of digital content creation is preparing for a monumental shift this March.

For creators who felt restricted by early AI limitations, the highly anticipated Wan 2.7 video release marks a turning point. It is no longer just about generating random clips; it is about building a professional, controllable ecosystem. By combining new features with the lessons learned from previous versions, Wan 2.7 is redefining how we approach digital storytelling.

Overcoming the Limits of 2.6

If you used the 2.6 version, you likely encountered the "blind box" dilemma—typing a prompt and hoping the AI wouldn't distort your character's face or violate the laws of physics.

The Wan 2.7 video engine moves away from pure text guessing. Instead of relying solely on text-to-video prompting, it introduces a multi-modal injection system. You can now use reference images to lock in an art style or audio to dictate the rhythm, which makes Wan 2.7 image-to-video generation far more precise.

Furthermore, the frustrating 15-second fragment limit is gone. With the Wan 2.7 continue-filming feature, the engine can extend your existing footage, generating long-take sequences without a fixed length cap that still follow the narrative logic of the original clip.

The New Four-Stage Professional Workflow

Adopting Wan 2.7 means upgrading to a studio-grade workflow:

- Pre-Production Precision: You can strictly define your opening and closing shots using First & Last Frame Control, ensuring your storyboard is executed perfectly.

- Dynamic Production: Need to animate a comic? The 9-grid image transformation turns static panels into fluid motion.

- Surgical Editing: Unlike basic style transfers, Wan 2.7 allows for instruction-based directed editing. Using specific @tags, you can replace elements or characters without disrupting the original lighting or camera trajectory.

- Audio-Visual Mastery: The Wan 2.7 video generator aligns lip movements to dialogue with millisecond accuracy and synchronizes physical actions to music beats.
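
To make the four stages concrete, here is a minimal sketch of how a single job covering them might be described in Python. It is not the Wan 2.7 API: the `VideoJob` class, every field name, the `@tag` syntax in the example instruction, and the nine-panel check are assumptions made purely for illustration.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class VideoJob:
    """Hypothetical job description; field names are NOT from Wan 2.7 documentation."""

    prompt: str
    first_frame: Optional[str] = None       # pre-production: opening-shot image
    last_frame: Optional[str] = None        # pre-production: closing-shot image
    grid_panels: List[str] = field(default_factory=list)  # production: comic panels
    edit_instruction: Optional[str] = None  # editing: free text containing @tags
    audio_track: Optional[str] = None       # audio-visual: dialogue or music file

    def referenced_tags(self) -> List[str]:
        """Return the @tags named in the edit instruction, in order of appearance."""
        if not self.edit_instruction:
            return []
        return re.findall(r"@(\w+)", self.edit_instruction)

    def validate(self) -> List[str]:
        """Collect problems before submitting; the 9-panel cap is an assumed rule."""
        problems = []
        if len(self.grid_panels) > 9:
            problems.append("a 9-grid accepts at most 9 panels")
        return problems


# Example: a storyboarded shot with a directed character swap.
job = VideoJob(
    prompt="A detective walks through a rainy alley",
    first_frame="shots/open.png",
    last_frame="shots/close.png",
    edit_instruction="replace @detective with @robot, keep the lighting",
    audio_track="audio/theme.mp3",
)
tags = job.referenced_tags()
problems = job.validate()
```

Keeping all four stages in one structure makes it easy to check a job locally, for example by confirming that every tag in the instruction maps to an asset you have actually uploaded, before spending generation credits.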

Best of all, the output is cleared for commercial use. Paid users retain 100% ownership of their generated content, which makes Wan 2.7 video safe for client delivery.

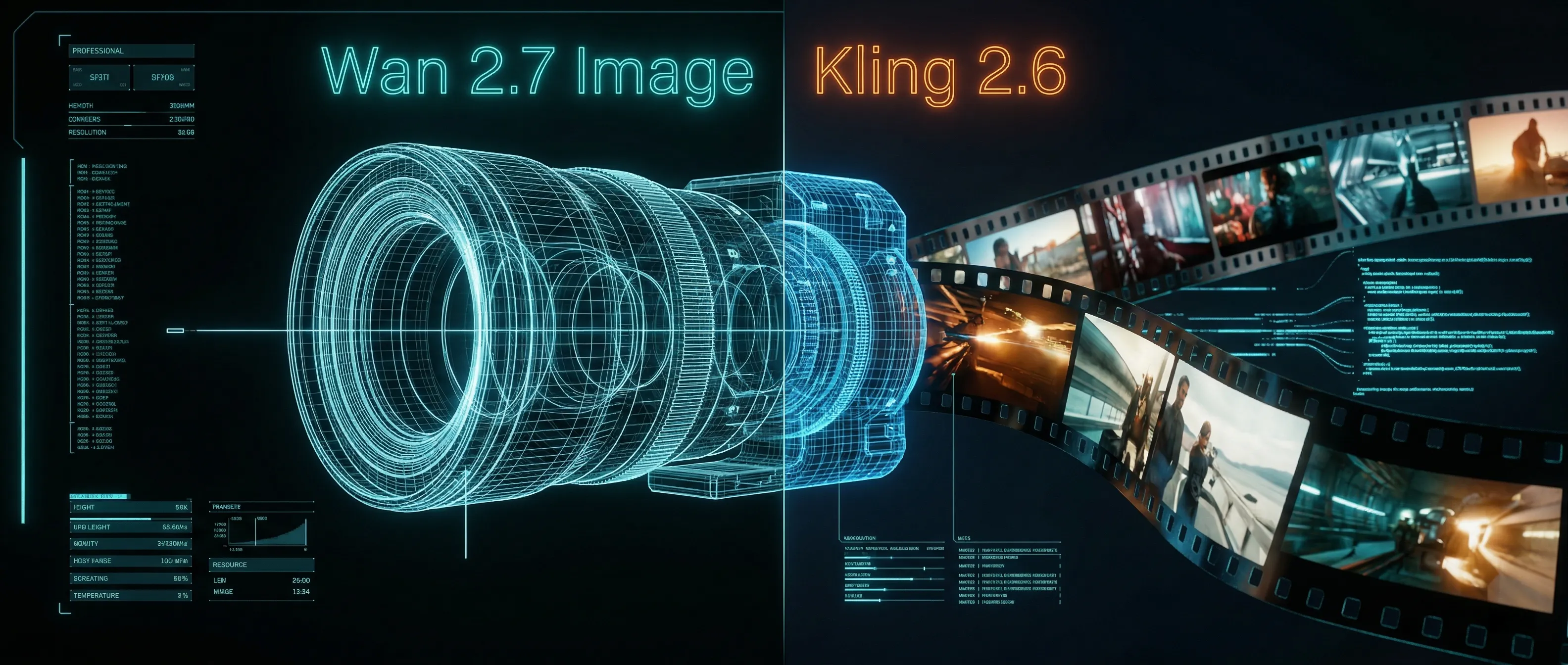
