The Next Generation of Generation: Unpacking the Wan 2.7 Upgrade

Digital content creation is set for a major shift this March.

For creators held back by the limitations of earlier AI video tools, the highly anticipated Wan 2.7 release marks a turning point. The goal is no longer just generating random clips but providing a professional, controllable production environment. Building on lessons learned from previous versions, Wan 2.7 changes how creators approach digital storytelling.

Overcoming the Limits of 2.6

If you used Wan 2.6, you likely ran into the "blind box" problem: typing a prompt and hoping the AI wouldn't distort your character's face or break the laws of physics.

Wan 2.7 moves beyond pure text prompting. Instead of relying solely on text-to-video generation, it introduces a multi-modal input system: you can supply images to lock in an art style or audio to dictate the rhythm. This makes image-to-video generation far more precise.

The frustrating 15-second clip limit is also gone. With Wan 2.7's "continue filming" feature, the engine extends your existing footage, producing long-take sequences that stay consistent with the narrative.

The New Four-Stage Professional Workflow

Adopting Wan 2.7 means moving to a studio-grade workflow:

- Pre-Production Precision: First & Last Frame Control lets you define your opening and closing shots exactly, so the generated clip follows your storyboard.

- Dynamic Production: Need to animate a comic? The 9-grid image transformation turns static panels into fluid motion.

- Surgical Editing: Unlike basic style transfer, Wan 2.7 supports instruction-based directed editing. Using @tags, you can replace elements or characters without disrupting the original lighting or camera trajectory.

- Audio-Visual Mastery: Wan 2.7 aligns lip movements to dialogue with millisecond accuracy and synchronizes physical actions to music beats.

Commercial use is covered as well. Paid users retain 100% ownership of their generated content, which makes Wan 2.7 output suitable for client delivery.

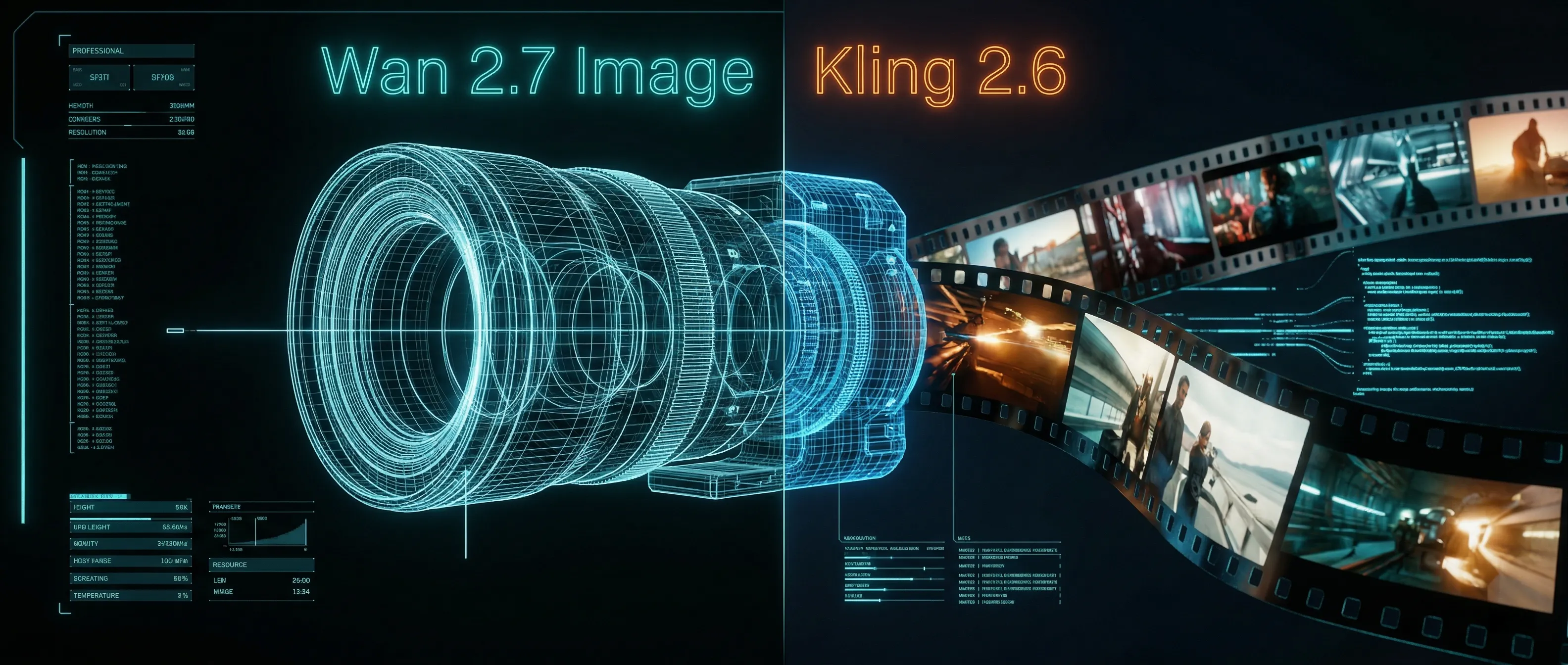
