Kling 3.0 vs HappyHorse 1.0: a production-focused comparison (quality, control, audio, API)

Kling 3.0 vs HappyHorse 1.0: how to choose fast (without trusting hype)

If you’re searching Kling 3.0 vs HappyHorse 1.0, you probably want a simple answer: which model is “better”?

In production, “better” depends on what you’re shipping:

- a short-form ad loop (TikTok / Reels / Shorts)

- a product-demo clip you’ll iterate weekly

- a creator workflow where audio and pacing matter

- an API-driven pipeline where reliability and knobs are the real product

This guide is built for teams who want to decide fast. You’ll get:

- a reality-checked summary of what public sources claim

- a decision matrix you can use in 60 seconds

- a minimum viable evaluation (MVE) you can run fast

- a failure-mode playbook (symptom → fastest fix)

Quick glossary (so “Kling 3.0 vs HappyHorse 1.0” threads make sense)

If you read Kling 3.0 vs HappyHorse 1.0 discussions, people often mix model names, endpoints, and evaluation terms. Here’s a fast glossary you can reuse in any AI video model comparison:

- Kling AI 3.0: the Kling 3.0 family (Feb 2026 release).

- HappyHorse-1.0: the model name used by providers.

- text-to-video model: prompt-only generation.

- image-to-video model: animate a still image.

- reference-to-video: use references to reduce identity drift.

- Elo ranking: blind preference signal (helpful, not sufficient).

- duration limit: seconds-per-clip cap that forces stitching.

- prompt drift / identity drift: the failures you must score.

Start with the only question that matters: what are you shipping?

The fastest way to resolve the Kling 3.0 vs HappyHorse 1.0 debate is to define acceptance gates before you generate anything.

Here are the five gates that matter in real pipelines.

Acceptance gate #1: consistency (does it stay the same across time?)

In most Kling 3.0 or HappyHorse 1.0 comparisons, “quality” usually means “looks sharp”. In production, the failures that block a release are more often temporal:

- identity drift (face/hair/clothes changing)

- lighting drift (scene mood shifts mid-clip)

- motion artifacts (warping in hands, teeth, text)

Acceptance gate #2: control (can you direct the shot?)

Control is not a bonus feature. It’s what turns a text-to-video model into a production tool:

- camera movement discipline (no random zooms)

- shot size discipline (wide/medium/close stays stable)

- subject intent (the model doesn’t “improvise” away from your story)

Acceptance gate #3: duration (do you need more than a micro-clip?)

Public sources often mention “up to 15s” as the upper bound for a single generation (Kuaishou's Kling AI 3.0 announcement, for example, cites roughly 15 seconds). That matters operationally: it tells you whether you can ship a full beat in one clip or must plan a stitching workflow.
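
The stitching decision comes down to simple arithmetic. A minimal sketch, assuming a hard per-clip cap: the 15-second default mirrors the figure public sources mention, `clips_needed` is a hypothetical helper name, and your provider's real cap may differ.

```python
import math

def clips_needed(target_seconds: float, cap_seconds: float = 15.0) -> int:
    """Number of separate generations needed to cover target_seconds.

    cap_seconds is an assumed per-clip limit, not a confirmed provider value.
    """
    if target_seconds <= 0 or cap_seconds <= 0:
        raise ValueError("durations must be positive")
    return math.ceil(target_seconds / cap_seconds)

print(clips_needed(12))  # 1: fits in a single generation
print(clips_needed(30))  # 2: plan one stitch point
print(clips_needed(40))  # 3: plan two stitch points
```

Anything above one clip means every stitch point becomes a place where identity or lighting can drift, so it belongs in your acceptance gates.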

Acceptance gate #4: audio expectation (silent-first or audio-in-the-loop?)

Audio is a workflow decision:

- silent-first: generate video, then add audio in post (fast to iterate visuals; slower to ship final)

- audio-in-the-loop: generate video + audio together (fewer post steps; higher expectations for sync)

Kling AI publicly positions Kling 3.0 as having native audio generation tied to its video workflow (more on that below).

Acceptance gate #5: integration & economics (API, endpoints, cost predictability)

Even the best AI video model comparison is incomplete without the boring parts:

- do you have API access or only a consumer UI?

- do you get endpoints for T2V, I2V, reference-to-video, and editing?

- can you estimate cost-per-minute and iteration count?

- can you standardize settings across providers?
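
To make the cost question concrete, here is a rough back-of-envelope sketch. It assumes per-second pricing, which not every provider uses; the function name, its parameters, and the example price are illustrative and are not real rates for either model.

```python
def cost_per_finished_minute(price_per_second: float,
                             iterations_per_keeper: float) -> float:
    """Estimated spend to produce 60 seconds of approved footage.

    Every rejected take is still paid for, so spend scales with how many
    generations you need before one clip passes review.
    """
    if price_per_second < 0 or iterations_per_keeper < 1:
        raise ValueError("price must be >= 0 and iterations must be >= 1")
    return 60 * price_per_second * iterations_per_keeper

# Hypothetical numbers: $0.10 per generated second, 4 takes per approved clip.
estimate = cost_per_finished_minute(price_per_second=0.10, iterations_per_keeper=4)
print(f"${estimate:.2f} per finished minute")  # $24.00 per finished minute
```

The iteration count usually dominates the estimate, which is why it is worth measuring during your own evaluation rather than guessing.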

What public sources actually claim (and what they don’t)

To keep this Kling 3.0 vs HappyHorse 1.0 guide grounded, here’s what the public sources say—separated into “announced capability” vs “what you still must test yourself”.

Kling AI 3.0 claims at-a-glance

Kuaishou’s Kling AI 3.0 announcement (Feb 5, 2026) positions Kling AI 3.0 as a set of models (Video 3.0 / Video 3.0 Omni / Image 3.0 / Image 3.0 Omni) and highlights:

- improved consistency and realism

- up to ~15 seconds per generation

- native audio generation (video + audio together)

- a unified multimodal architecture

- multi-shot storyboarding (notably for the “Omni” model)

Source: Kuaishou IR press release (Kling AI 3.0 launch): https://ir.kuaishou.com/news-releases/news-release-details/kling-ai-launches-30-model-ushering-era-where-everyone-can-be

What this doesn’t guarantee:

- that your specific character remains consistent across your prompts

- that audio sync meets your brand threshold (lip-sync, timing, style)

It does suggest Kling AI 3.0 is positioned around narrative control and audiovisual completeness.

HappyHorse 1.0 claims at-a-glance

HappyHorse 1.0 (often written as HappyHorse-1.0) is described publicly as a top-ranked AI video model on Artificial Analysis, with distribution via API partners.

Two useful public anchors:

- A WSJ report (Apr 10, 2026) frames HappyHorse 1.0 as a new Alibaba model topping a global ranking shortly after debut. Source: https://www.wsj.com/tech/ai/alibabas-new-ai-video-generation-model-tops-global-ranking-after-debut-801fe3f7

- A PRNewswire release (Apr 27, 2026) announces fal as an official API partner, with endpoints for text-to-video, image-to-video, reference-to-video, and video editing. Source: https://www.prnewswire.com/news-releases/fal-launches-happyhorse-1-0--the-1-ranked-ai-video-model-as-official-api-partner-302755003.html

What this doesn’t guarantee:

- that the #1 ranking maps to your target scenes (product demos, humans, faces, motion)

- that your provider’s parameters match the configuration used in any public demo

It does suggest HappyHorse-1.0 is designed for API-first usage with multiple endpoints.

Leaderboard reality check: Elo is useful, but only for one slice of truth

Leaderboards like Artificial Analysis Video Arena are useful for one thing: blind preference at scale. If lots of people choose one output over another, that’s a signal about perceived quality.
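
For intuition about what a rating gap means, the classic Elo formula converts a rating difference into an expected head-to-head preference rate. This sketch assumes the standard logistic form with a 400-point scale; the leaderboard's exact methodology may differ, so treat the numbers as illustrative.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected share of pairwise comparisons that A wins against B.

    Standard Elo logistic curve with a 400-point scale (an assumption here,
    not a statement about how any specific leaderboard computes ratings).
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))

print(round(elo_expected_score(1200, 1200), 2))  # 0.5
print(round(elo_expected_score(1300, 1200), 2))  # 0.64
```

Under that assumption, a 100-point lead means the higher-rated model is preferred in roughly 64% of pairings, so it still loses about one comparison in three.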

But it’s not a substitute for a production evaluation of Kling 3.0 vs HappyHorse 1.0.

Here are the three ways teams misread rankings.

Misread #1: “#1 means it’s best for my workflow”

A model can win blind preference comparisons and still be painful operationally.

Misread #2: “The leaderboard prompt is my prompt”

Leaderboard prompts are often optimized for visual “wow”. Production prompts are optimized for brand-safe, repeatable constraints.

Misread #3: “It ranked high, so I don’t need a test harness”

You still need your own MVE test because your pipeline constraints are unique.

Decision matrix (pick a default in 60 seconds for Kling 3.0 vs HappyHorse 1.0)

Here’s a pragmatic Kling 3.0 vs HappyHorse 1.0 decision matrix.

| Your priority | Pick Kling AI 3.0 when… | Pick HappyHorse-1.0 when… |
|---|---|---|
| Consistency in narrative clips | You care about coherent storytelling beats and multi-shot sequencing | You primarily care about single-clip best-case output and will validate consistency with your own harness |
| Shot control & storyboards | You want a model positioned around multi-shot storyboarding and direction | You prefer endpoint-driven generation and will build control via references + prompt specs |
| Audio in the loop | You want native audio generation as part of the generation workflow | You’re OK with silent-first or you will attach audio later in your pipeline |
| API integration | Your workflow is still UI-first and you want a “model product” that’s evolving quickly | You want a clean API story with multiple endpoints (T2V/I2V/reference/edit) from a provider partner |
| Procurement & budgeting | You can tolerate fuzzier pricing signals and will budget by iteration limits | You want clearer provider pricing signals and can estimate cost-per-minute per provider |
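
If you want the matrix as a quick checklist, it can be reduced to a vote count. This is only a sketch: the priority keys are hypothetical labels for the rows above, each one counts equally (your team may weight them differently), and a tie deliberately returns no default.

```python
from collections import Counter

# Each priority maps to the column it favors in the decision matrix.
MATRIX = {
    "multi_shot_storytelling": "Kling AI 3.0",
    "storyboard_direction": "Kling AI 3.0",
    "native_audio_in_loop": "Kling AI 3.0",
    "ui_first_workflow": "Kling AI 3.0",
    "multi_endpoint_api": "HappyHorse-1.0",
    "clear_provider_pricing": "HappyHorse-1.0",
}

NO_DEFAULT = "no default: run the MVE on both"

def pick_default(priorities: set[str]) -> str:
    """Return the model favored by most of the selected priorities."""
    unknown = priorities - set(MATRIX)
    if unknown:
        raise ValueError(f"unknown priorities: {sorted(unknown)}")
    ranked = Counter(MATRIX[p] for p in priorities).most_common()
    # Empty input or a tie for first place means the matrix cannot decide.
    if not ranked or (len(ranked) > 1 and ranked[0][1] == ranked[1][1]):
        return NO_DEFAULT
    return ranked[0][0]

print(pick_default({"native_audio_in_loop", "multi_shot_storytelling", "multi_endpoint_api"}))
# Kling AI 3.0
```

The result is a starting default only; it does not replace running both models through the same tests.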

Minimum viable evaluation (MVE): a 30-minute test harness

If you only do one thing after reading this AI video model comparison, do this: run the same three tests on both models and score them with the same rubric. This makes your Kling 3.0 vs HappyHorse 1.0 conclusion defensible.

How to run the test (fast)

- Pick one aspect ratio you ship most (9:16 or 16:9).

- Use the same duration setting if available (don’t compare 4s vs 15s).

- Score quickly. Don’t “prompt your way into a win” on the first pass.
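
One way to keep the comparison fair is to build the run list up front so every model gets identical settings. A minimal sketch; `RunSpec`, `build_run_plan`, and the setting names are hypothetical and not tied to any provider's API.

```python
from dataclasses import dataclass
from itertools import product

@dataclass(frozen=True)
class RunSpec:
    """One generation to run: which model, which test, and the fixed settings."""
    model: str
    test_name: str
    aspect_ratio: str
    duration_seconds: int

def build_run_plan(models, tests, aspect_ratio, duration_seconds):
    """Pair every model with every test while holding the settings constant."""
    return [
        RunSpec(model, test, aspect_ratio, duration_seconds)
        for model, test in product(models, tests)
    ]

plan = build_run_plan(
    models=["Kling AI 3.0", "HappyHorse-1.0"],
    tests=["studio_product", "human_performance", "two_beat_story"],
    aspect_ratio="9:16",
    duration_seconds=5,  # illustrative value; use whatever both providers support
)
print(len(plan))  # 6
```

Because the settings live in one place, a mismatched duration or aspect ratio between models cannot slip in by accident.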

Test prompt set (3 scenes designed to reveal failures)

Test 1: “Commercial studio product” (reveals realism + stability)

Product demo in a clean bright studio. A single smartphone on a minimal pedestal.

Camera: slow controlled orbit, no sudden zoom, no shaky motion. Keep framing stable.

Lighting: softbox key light, gentle reflections, no flicker. Background: plain gradient.

No text overlays, no logos, no extra products.

Test 2: “Human performance + subtle motion” (reveals identity drift)

A person standing in a softly lit room, speaking a short line while gesturing naturally.

Camera: locked-off, medium shot, no zoom. Keep the face stable and realistic.

Avoid artifacts in hands, teeth, and eyes. No text, no brand marks.

Test 3: “Two-beat micro story” (reveals multi-shot control)

Two-beat scene: (1) close-up of a coffee cup being placed on a desk, (2) medium shot of a person sitting down and opening a laptop.

Keep the scene consistent: same room, same lighting, same person.

Camera moves should be smooth and intentional. No random cuts.

Scoring rubric (simple, useful)

Score each test from 1–5 on:

- Consistency

- Control

- Artifacts

- Audio fit (if you enable audio)

Then add one production score:

- Iteration cost
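
The rubric can be tallied in a few lines. This sketch assumes that 5 is the best score on every criterion (so a high "artifacts" score means few artifacts) and that audio fit is simply omitted when audio is off; the function names and example scores are made up for illustration.

```python
from statistics import mean

QUALITY_CRITERIA = ("consistency", "control", "artifacts", "audio_fit")

def score_test(scores: dict[str, int]) -> float:
    """Average the 1-5 quality scores recorded for one test clip."""
    unknown = set(scores) - set(QUALITY_CRITERIA)
    if unknown:
        raise ValueError(f"unknown criteria: {sorted(unknown)}")
    if not scores:
        raise ValueError("at least one criterion must be scored")
    for name, value in scores.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5")
    return float(mean(scores.values()))

def score_model(tests: dict[str, dict[str, int]]) -> float:
    """Average the per-test scores so both models share one comparable number."""
    return round(mean(score_test(s) for s in tests.values()), 2)

example = {
    "studio_product": {"consistency": 4, "control": 5, "artifacts": 3},
    "human_performance": {"consistency": 3, "control": 4, "artifacts": 3, "audio_fit": 2},
    "two_beat_story": {"consistency": 4, "control": 4, "artifacts": 4},
}
print(score_model(example))  # 3.67
```

Iteration cost is left out of the average on purpose here: reporting it next to the quality score keeps a cheap but weak model from looking equivalent to an expensive but strong one.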

The one-change-per-iteration rule

If you iterate, change only one thing at a time.
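
The rule is easy to enforce mechanically if you log the settings of each run. A small sketch, assuming each iteration is stored as a flat dictionary; the keys shown (`prompt`, `duration_seconds`, `seed`) are hypothetical.

```python
_MISSING = object()  # distinguishes "key absent" from "key set to None"

def changed_keys(previous: dict, current: dict) -> set:
    """Return every key whose value differs between two iterations."""
    keys = set(previous) | set(current)
    return {
        k for k in keys
        if previous.get(k, _MISSING) != current.get(k, _MISSING)
    }

def single_change(previous: dict, current: dict) -> str:
    """Return the one changed key, or raise if the rule was broken."""
    diff = changed_keys(previous, current)
    if len(diff) != 1:
        raise ValueError(f"expected exactly one change, found {sorted(diff)}")
    return diff.pop()

base = {"prompt": "slow controlled orbit", "duration_seconds": 5, "seed": 7}
revised = {**base, "duration_seconds": 10}
print(single_change(base, revised))  # duration_seconds
```

When a result improves or regresses, the returned key tells you which change to credit.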

Failure-mode playbook (symptom → fastest fix)

Symptom: identity drift (face/hair/outfit changes)

Fast fixes: reduce prompt entropy, use reference-to-video, use concrete constraints.

Symptom: camera chaos (random zoom, shake, unwanted cuts)

Fast fixes: lock a single camera behavior, remove handheld language, restate constraints.

Symptom: motion artifacts (hands/teeth/text warping)

Fast fixes: simplify action, avoid high-frequency patterns, use editing when available.

Symptom: audio mismatch (timing feels off, lip-sync misses)

Fast fixes: treat audio as a gate, shorten dialogue, validate lip-sync support.
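
If reviewers tag clips with a symptom, the playbook above can live next to the harness as a plain lookup. A sketch only: the symptom keys are hypothetical tags, and the fixes are copied from the list above.

```python
PLAYBOOK = {
    "identity drift": [
        "reduce prompt entropy",
        "use reference-to-video",
        "use concrete constraints",
    ],
    "camera chaos": [
        "lock a single camera behavior",
        "remove handheld language",
        "restate constraints",
    ],
    "motion artifacts": [
        "simplify action",
        "avoid high-frequency patterns",
        "use editing when available",
    ],
    "audio mismatch": [
        "treat audio as a gate",
        "shorten dialogue",
        "validate lip-sync support",
    ],
}

def fastest_fixes(symptom: str) -> list[str]:
    """Look up fixes for a reviewer tag; unknown tags return an empty list."""
    return PLAYBOOK.get(symptom.strip().lower(), [])

print(fastest_fixes("Camera chaos")[0])  # lock a single camera behavior
```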

Procurement checklist (teams + budgets)

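Before you commit, confirm four things: whether you are buying the model or one provider's hosting of it, which endpoints you actually get, whether settings can be standardized across providers, and how you will budget for iterations.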

The “provider fragmentation” trap

Same model name ≠ same defaults. Keep one harness and re-run it whenever you switch providers.
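
A simple guard against silent default changes is to refuse any request that leaves a key setting to the provider. A minimal sketch; the setting names are hypothetical and should be replaced with whatever your providers actually expose.

```python
# Settings the harness should always set explicitly (illustrative names).
PINNED_SETTINGS = ("aspect_ratio", "duration_seconds", "audio_enabled")

def unpinned(request: dict) -> list[str]:
    """List the settings that would fall back to a provider default."""
    return [name for name in PINNED_SETTINGS if request.get(name) is None]

request = {"prompt": "Product demo in a clean bright studio.", "aspect_ratio": "9:16"}
print(unpinned(request))  # ['duration_seconds', 'audio_enabled']
```

An empty list means the run depends only on values you chose, so scores from two providers stay comparable.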

Recommended workflows (safe defaults)

Workflow A: short-form ad loop

Run the same harness, then iterate one change at a time.

Workflow B: product-demo loop

Keep a stable template and compare weekly.


Verdict: pick a default, then validate with your own clips

There is no universal winner. Pick a default from the decision matrix, run the MVE on your own scenes, then standardize on the settings that pass.



Kling 3.0 vs Runway Gen-4.5: El enfrentamiento definitivo de video IA (Comparativa 2026)

Una comparativa exhaustiva de 2026. Probamos Kling 3.0 vs Runway Gen-4.5 (Gama alta) y Kling 2.6 vs Gen-4 (Estándar). Descubre qué generador de video IA ofrece los mejores créditos diarios gratuitos.

Kling 3 vs Kling 2.6: The Ultimate Comparison & User Guide (2026)

Kling 3 Video ha llegado con modelos Omni y lip-sync nativo. ¿Cómo se compara con Kling 2.6? Analizamos las diferencias, características y qué herramienta de Klingai deberías elegir.

GPT Image 2 360 VR Background: flujo entregable para panoramas equirectangulares sin costuras

Entregable real: gpt image 2 360 panorama 2:1 equirectangular, seam fix y validacion en viewer QA.

Kling 3 4K vs Pro (1080p): cuando 4K vale la pena (y cuando no)

Marco de decision para Kling 3 4K vs Pro (1080p): cuando 4K mejora detalle, movimiento y compresion, y cuando 1080p es mejor.

Kling 3 4K workflow: prompts, plan de planos y export que aguanta de verdad

Un Kling 3 4K workflow repetible: iteracion en dos pases, templates de prompt, reglas de complejidad y export para recompression.

Kling 3 native 4K: que cambia en calidad, movimiento, compresion y uso real

Que cambia Kling 3 native 4K frente a 1080p: mas detalle, movimiento mas limpio, menos artefactos y cuando 4K vale la pena.

HappyHorse AI Video Generator: qué puede hacer el nuevo modelo

Descubre HappyHorse, un nuevo modelo de generación de video con text-to-video, image-to-video, video-to-video, audio nativo y flujos pensados para creadores.

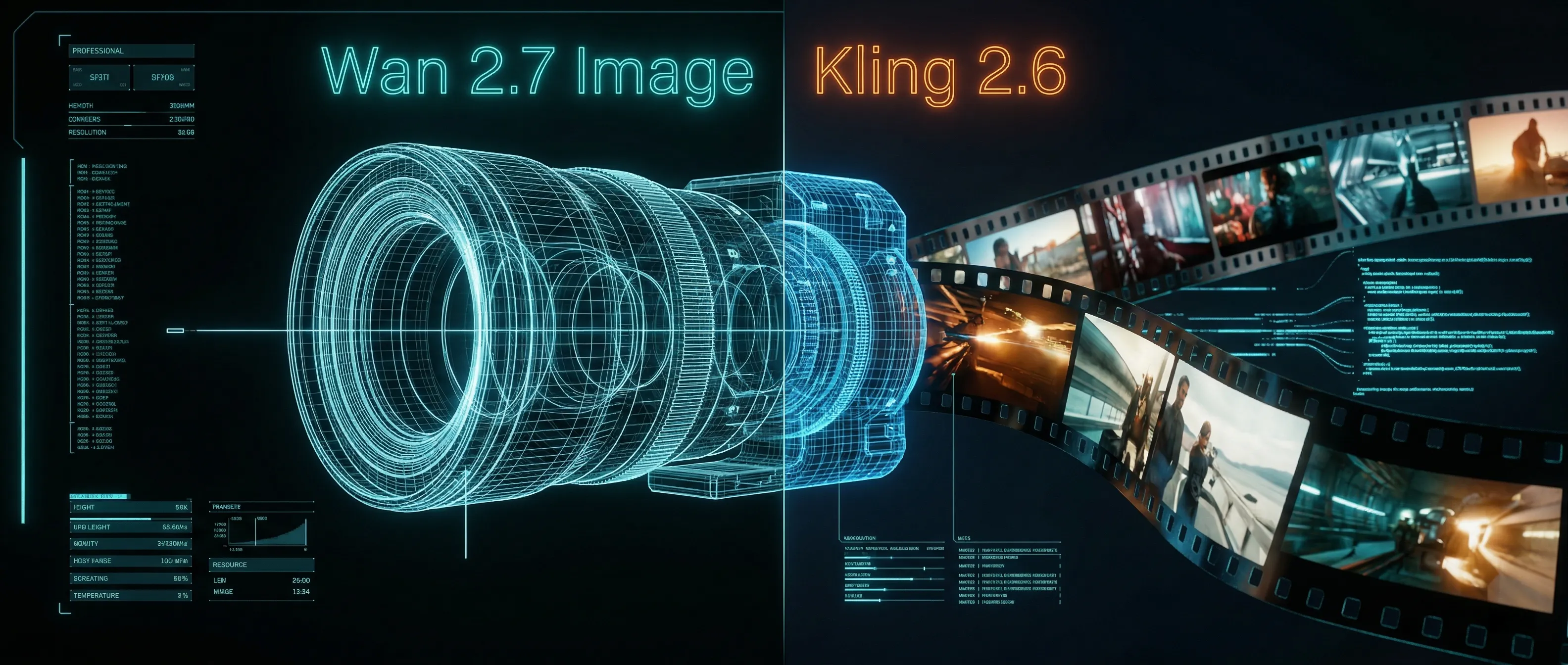

Wan 2.7 Image Meets Kling 2.6: The Ultimate AI Visual Workflow

Descubra cómo las capacidades avanzadas de edición y renderizado de texto 3K del nuevo modelo Wan 2.7 Image crean la canalización de activos perfecta para la generación de video de Kling 2.6.